Artificial Intelligence (AI) has been central to Google's strategy for many years, and that focus was on full display at the Google I/O conference in 2023. The range of new products and features unveiled at the event shows where the company is heading with its AI development.

One of the most significant announcements was PaLM 2, Google's next-generation large language model (LLM). Building on its predecessors, PaLM 2 draws on advances in compute-optimal scaling, scaled instruction fine-tuning, and an improved dataset mixture. The model is designed to be fine-tuned and instruction-tuned for different purposes, and it has already been integrated into more than 25 Google products and features.

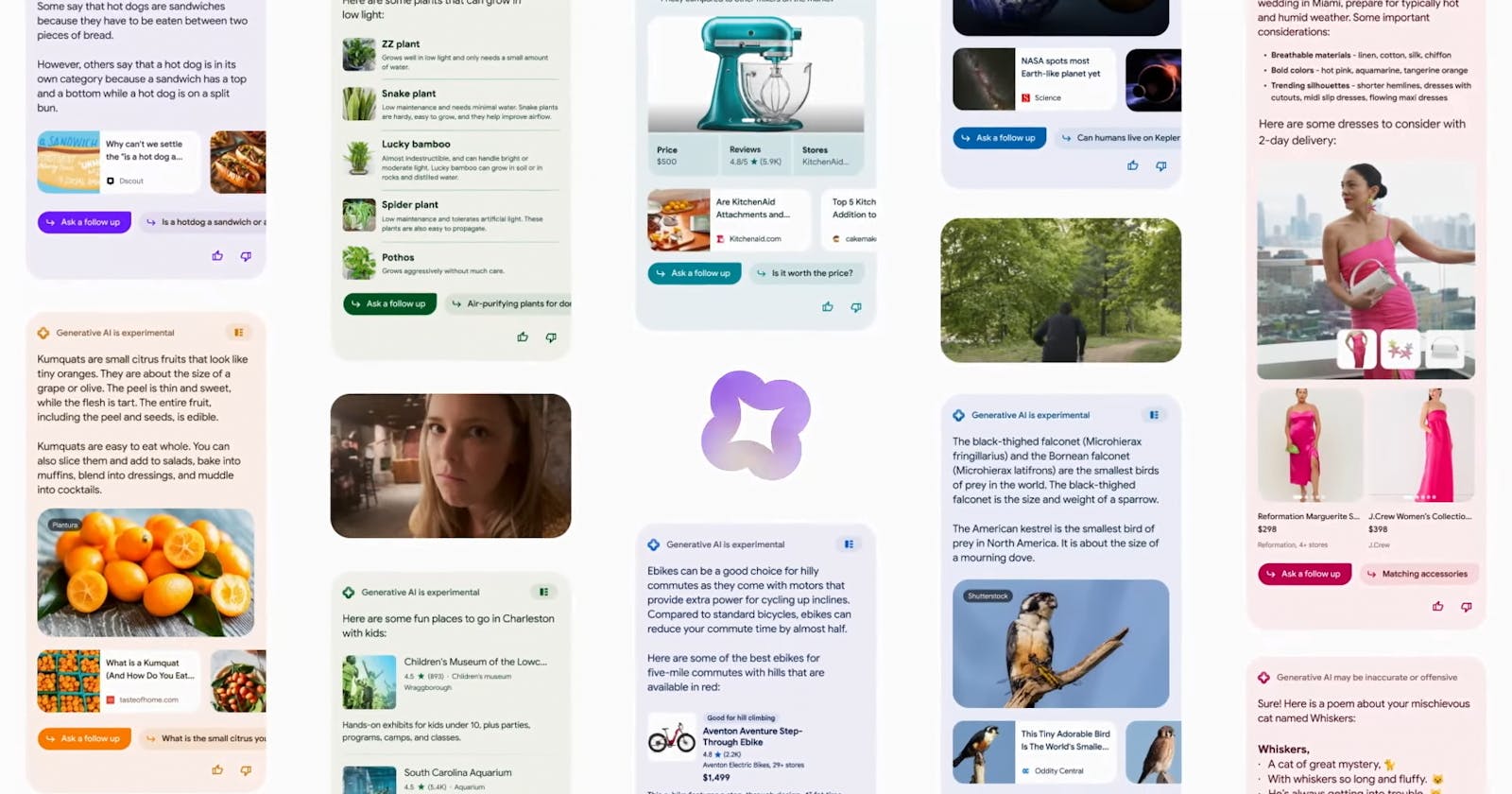

Among the products using PaLM 2 are Bard, a tool aimed at boosting productivity and fueling curiosity (available in around 180 countries, though not in the EU and some other regions), and Search Generative Experience, which augments online search with AI-powered snapshots of key information. Also noteworthy is Codey, a version of PaLM 2 fine-tuned on source code to act as a developer assistant, with Code AI features such as code completion, bug fixing, and source code migration. Google is clearly applying AI across a wide range of user experiences, from general productivity to specialized tasks like coding.

In image and video generation, Google showcased Imagen and Phenaki. Imagen, a family of image generation and editing models, is being incorporated into several Google products, including Google Slides and Android's Generative AI wallpaper, which signals Google's ambitions in AI-powered visuals. Phenaki is a Transformer-based text-to-video model that can synthesize realistic videos from sequences of text prompts, opening up new possibilities for video content creation.

ARCore's Scene Semantics API was also announced at the conference. It lets developers build custom AR experiences that respond to the features of the user's surroundings, underlining Google's commitment to improving AR through AI.

In the field of speech recognition, Google launched Chirp, a family of Universal Speech Models trained on 12 million hours of speech. Chirp enables automatic speech recognition (ASR) for 100+ languages, emphasizing Google's dedication to inclusivity and accessibility in AI.

Lastly, the launch of MusicLM, a text-to-music model, shows Google's interest in bringing AI to music creation. MusicLM can generate 20 seconds of music from a text prompt, offering a glimpse of what AI-assisted composition might look like.

Thoughts

In my opinion, Google's AI development is characterized by two main themes: improving user experiences across many domains and pushing the boundaries of what AI can achieve. Rather than focusing on a single aspect of AI, the company is applying it broadly to build better, more intuitive products and services.

However, it is important to weigh the challenges and ethical questions that come with more capable AI. Data privacy, the risk of AI systems being misused, and the potential impact on jobs and society at large are all valid concerns that need to be addressed. As AI continues to advance, companies like Google should take the lead in ensuring that these technologies are developed and used responsibly.
